System & Settings

Settings & Local AI Configuration

General settings and advanced Local AI configuration for power users.

General Settings

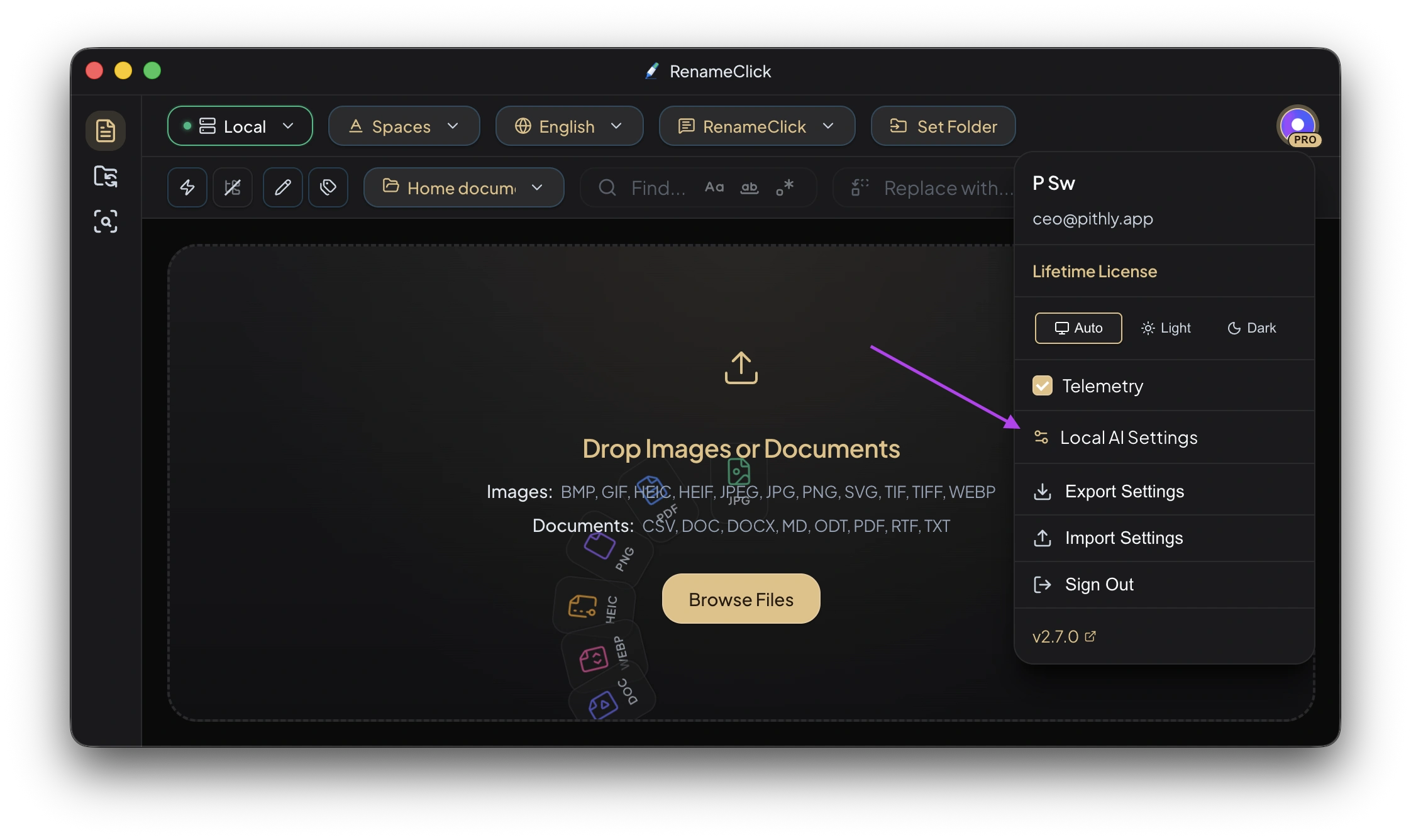

Theme

Light and dark mode support.

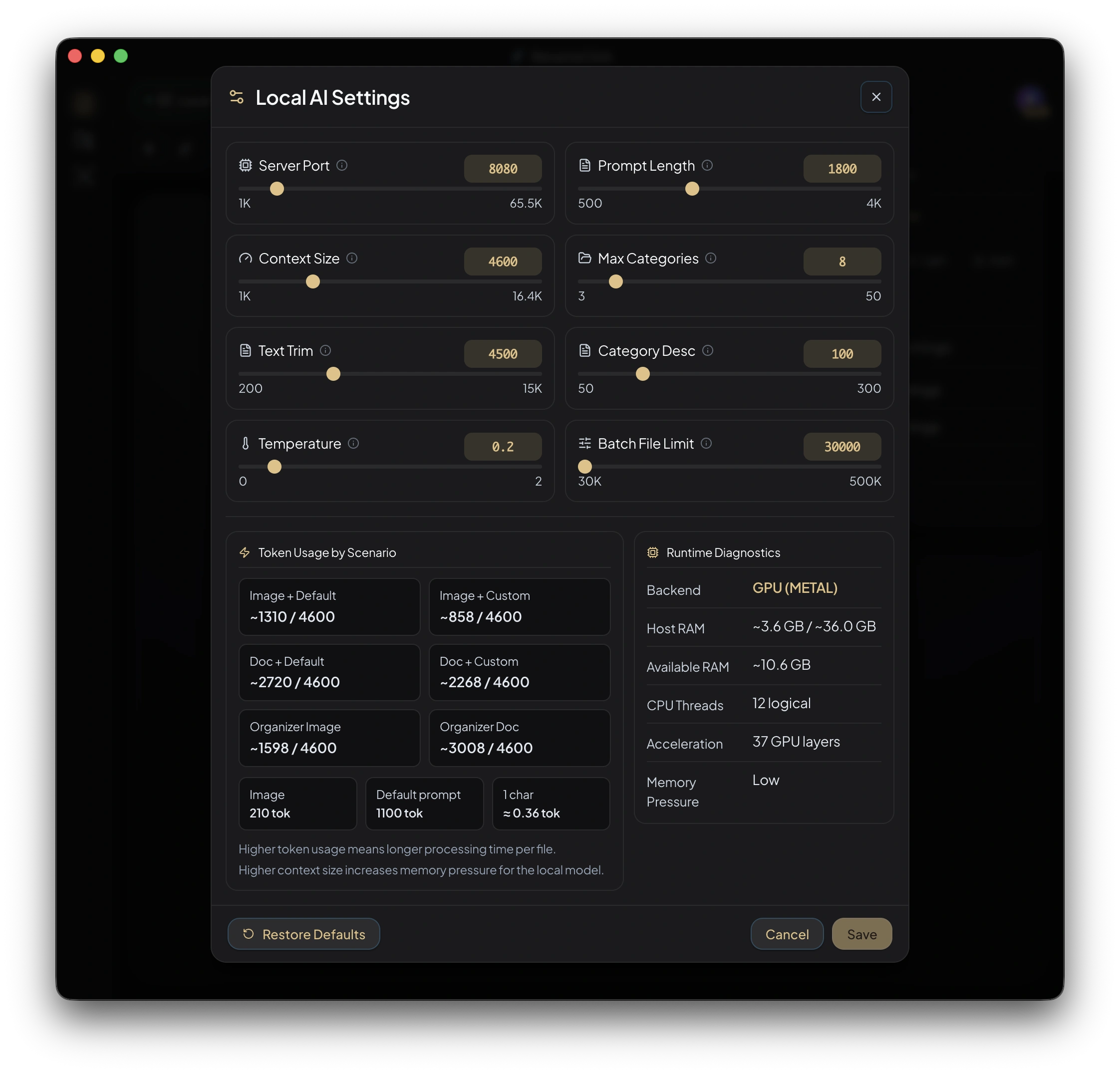

Local AI Settings

The Local AI Settings panel also serves as a general power-user control panel: its settings affect all providers.

Server Port

| Setting | Value |
|---|---|
| Default | 8080 |
| Fallback | Auto-selects a free port if 8080 is occupied |
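The fallback behavior can be illustrated with a minimal sketch. This is not the application's actual implementation; the function name `pick_server_port` and the choice of `127.0.0.1` as the bind address are assumptions made for illustration. It tries the preferred port first and, if that port is occupied, asks the operating system for any free port by binding to port 0.

```python
import socket

DEFAULT_PORT = 8080  # documented default


def pick_server_port(preferred: int = DEFAULT_PORT, host: str = "127.0.0.1") -> int:
    """Return `preferred` if it can be bound, otherwise an OS-assigned free port.

    Hypothetical helper: the name and the bind address are assumptions.
    """
    for candidate in (preferred, 0):  # port 0 asks the OS for any free port
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            try:
                sock.bind((host, candidate))
            except OSError:
                continue  # port is occupied (or not permitted); try the next one
            return sock.getsockname()[1]
    raise RuntimeError("no free port available")


if __name__ == "__main__":
    print(f"Local AI server would listen on port {pick_server_port()}")
```

Note that the probe socket is closed before the port is returned, so a real server would need to bind promptly or handle the rare case where another process takes the port in between.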

Context Size

Controls how much context the local AI model uses for analysis. A larger context improves understanding of long documents but uses more memory.

Temperature

| Value | Behavior |
|---|---|
| Lower (0.0–0.3) | More deterministic, consistent results |
| Higher (0.7–1.0) | More creative, varied results |

Prompt Length Limit

| Setting | Value |
|---|---|
| Default | 1,800 characters |
| Range | 500 to 4,000 characters |

Text Trim Limit

Maximum number of characters extracted from a document before it is sent to the AI. Longer documents are truncated to this limit.

| Setting | Value |
|---|---|
| Default | 4,500 characters |

Truncation strategy:

- Head only: First N characters

- Head + Tail: First N/2 and last N/2 characters (better for documents with signatures/summaries at the end)
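The two strategies above can be sketched as follows. This is an illustrative sketch, not the application's code: the function name `trim_text` and the strategy labels `"head"` and `"head_tail"` are hypothetical, and giving the extra character to the tail when N is odd is an assumption.

```python
DEFAULT_TRIM_LIMIT = 4500  # documented default, in characters


def trim_text(text: str, limit: int = DEFAULT_TRIM_LIMIT, strategy: str = "head") -> str:
    """Truncate `text` to at most `limit` characters.

    Hypothetical helper: strategy names are illustrative labels only.
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    if len(text) <= limit:
        return text  # short documents pass through unchanged
    if strategy == "head":
        return text[:limit]  # first N characters
    if strategy == "head_tail":
        head_len = limit // 2
        tail_len = limit - head_len  # assumption: tail gets the extra char if N is odd
        return text[:head_len] + text[-tail_len:]
    raise ValueError(f"unknown strategy: {strategy!r}")


if __name__ == "__main__":
    document = "Dear team, " + "body text " * 1000 + "Regards, Alex"
    trimmed = trim_text(document, strategy="head_tail")
    print(len(trimmed), trimmed.endswith("Regards, Alex"))
```

With Head + Tail, the closing signature survives truncation, which is why that strategy suits documents whose key information sits at the end.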

Max Categories Per Preset

| Setting | Value |
|---|---|
| Default | 8 |
| Range | 3 to 50 |

Category Description Length Limit

| Setting | Value |
|---|---|
| Default | 100 characters |
| Range | 50 to 300 characters |

Batch File Limit

| Setting | Value |
|---|---|
| Default | 30,000 files |
| Range | 30,000 to 500,000 files |

This limit affects Rename workspace, AI Search, and Auto Flow.
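For reference, the documented defaults and ranges for the numeric limits can be collected into one table and checked in code. The sketch below is only an illustration: the key names, the `Limit` class, and the `resolve` function are hypothetical, and clamping out-of-range values (rather than rejecting them) is an assumption about how such settings might be handled.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Limit:
    default: int
    minimum: int
    maximum: int

    def clamp(self, value: int) -> int:
        """Pull `value` into the inclusive range [minimum, maximum]."""
        return max(self.minimum, min(self.maximum, value))


# Values come from the tables in this section; the key names are hypothetical.
LIMITS = {
    "prompt_length_chars": Limit(default=1_800, minimum=500, maximum=4_000),
    "max_categories_per_preset": Limit(default=8, minimum=3, maximum=50),
    "category_description_chars": Limit(default=100, minimum=50, maximum=300),
    "batch_file_limit": Limit(default=30_000, minimum=30_000, maximum=500_000),
}


def resolve(name: str, value: Optional[int] = None) -> int:
    """Return the default when no value is given, else the value clamped to its range."""
    limit = LIMITS[name]
    return limit.default if value is None else limit.clamp(value)


if __name__ == "__main__":
    print(resolve("prompt_length_chars"))           # default
    print(resolve("prompt_length_chars", 9_000))    # above the documented maximum
    print(resolve("max_categories_per_preset", 1))  # below the documented minimum
```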

Provider Settings

Cloud Providers (OpenAI, Google, Alibaba Cloud)

- Enter API key

- Key validation on entry

- Model selection (when provider offers multiple models)

Local Server Providers (LM Studio, Ollama)

- Set base URL (e.g., http://localhost:1234)

- Connection status indicator

- Model selection from available models

Data & Privacy

Telemetry

- Sentry crash reporting is opt-in only

- Can be enabled/disabled in settings

All data is stored locally. Cloud providers only receive file content during active processing when you explicitly use them.