AI Providers & Models

Switch between local AI, OpenAI, Google Gemini, Alibaba Cloud, LM Studio, and Ollama.

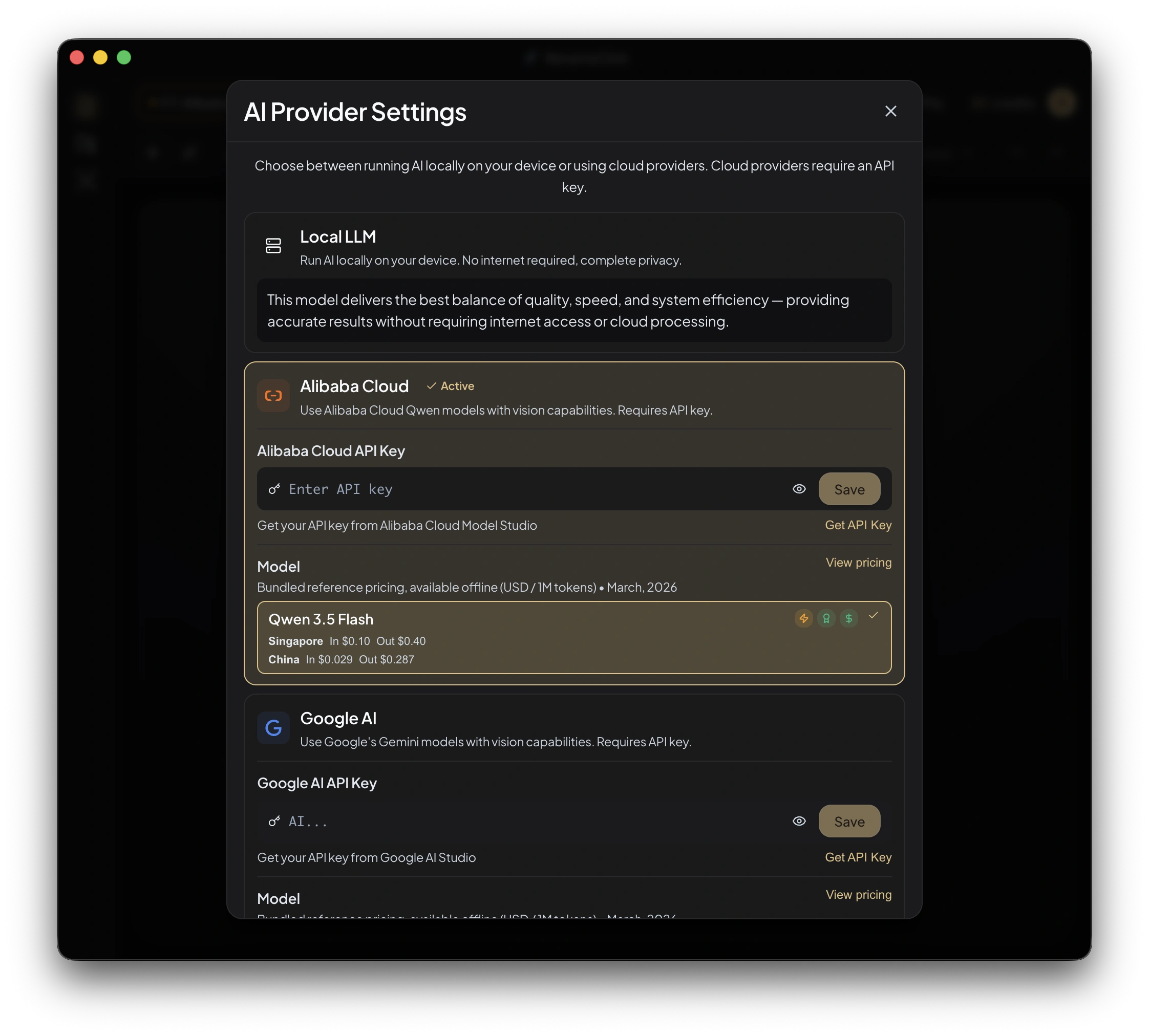

RenameClick supports multiple AI providers for file analysis. You can switch providers at any time based on your preferences for privacy, quality, speed, or cost.

Supported Providers

Local (Built-in)

| Setting | Value |
|---|---|
| Model | Qwen3-VL |
| Type | Vision-language model |
| Engine | llama.cpp (local inference) |
| RAM needed | ~4 GB |
| Internet | Not required (after model download) |
| API Key | Not required |
| Cost | Free |

The local provider is the default and recommended option. After the one-time model download (~4 GB), it runs entirely on your machine.

GPU acceleration:

- macOS: Metal (automatic, no configuration needed)

- Windows: automatic detection (NVIDIA/AMD discrete GPU → Vulkan, Intel Arc → SYCL, integrated graphics → CPU), with automatic CPU fallback
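The detection order above can be read as a simple decision rule. The sketch below is illustrative only: `GpuKind` and `pick_backend` are hypothetical names, not RenameClick's actual code, and the `backend_ok` flag is an assumed stand-in for "the GPU backend initialised successfully".

```python
from enum import Enum


class GpuKind(Enum):
    """Hypothetical categories for the GPU found on the machine."""
    NVIDIA_DISCRETE = "nvidia"
    AMD_DISCRETE = "amd"
    INTEL_ARC = "intel_arc"
    INTEGRATED = "integrated"


def pick_backend(os_name: str, gpu: GpuKind, backend_ok: bool = True) -> str:
    """Map an OS and detected GPU to an inference backend.

    Assumption: `backend_ok=False` models the automatic CPU fallback
    when the preferred GPU backend cannot be used.
    """
    if os_name == "macos":
        return "metal"  # Metal is used automatically on macOS
    preferred = {
        GpuKind.NVIDIA_DISCRETE: "vulkan",
        GpuKind.AMD_DISCRETE: "vulkan",
        GpuKind.INTEL_ARC: "sycl",
    }.get(gpu, "cpu")  # integrated graphics run on the CPU
    return preferred if backend_ok else "cpu"
```

For example, `pick_backend("windows", GpuKind.INTEL_ARC)` selects SYCL, while the same call with `backend_ok=False` falls back to the CPU.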

OpenAI

| Setting | Value |
|---|---|
| Models | GPT-4o, GPT-4o-mini, and other vision-capable models |
| Internet | Required |
| API Key | Required |
| Cost | Per OpenAI pricing |

Best for: highest accuracy on complex documents, especially multi-language content.

Google Gemini

| Setting | Value |
|---|---|
| Models | Gemini Pro Vision and other Gemini models |
| Internet | Required |
| API Key | Required |
| Cost | Per Google pricing |

Best for: strong vision capabilities, good balance of speed and quality.

Alibaba Cloud (Qwen)

| Setting | Value |
|---|---|
| Models | Qwen-VL and related models |
| Internet | Required |
| API Key | Required |
| Cost | Per Alibaba Cloud pricing |

Best for: excellent multi-language support, especially CJK languages.

LM Studio

| Setting | Value |
|---|---|
| Requirement | LM Studio running locally with a vision-capable model |
| Internet | Not required |
| API Key | Not required |
| Cost | Free |

Setup: Download LM Studio → Load a vision model → Start the local server → Set the base URL in RenameClick (default: http://localhost:1234).
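Before pointing RenameClick at LM Studio, it can help to confirm that the local server is up and has a model loaded. The sketch below is a standalone check, not part of RenameClick: it queries LM Studio's OpenAI-compatible `/v1/models` endpoint. The helper names `models_url` and `list_loaded_models` are hypothetical, and whether RenameClick itself expects the base URL with or without a `/v1` suffix is an assumption this code sidesteps by accepting both.

```python
import json
import urllib.error
import urllib.request


def models_url(base_url: str) -> str:
    """Build the model-listing URL from a base URL such as http://localhost:1234."""
    base = base_url.rstrip("/")
    if base.endswith("/v1"):  # tolerate a base URL that already includes /v1
        base = base[: -len("/v1")]
    return base + "/v1/models"


def list_loaded_models(base_url: str = "http://localhost:1234",
                       timeout: float = 3.0) -> list[str]:
    """Return the model ids the server reports, or [] if it cannot be reached."""
    try:
        with urllib.request.urlopen(models_url(base_url), timeout=timeout) as resp:
            payload = json.load(resp)
    except (urllib.error.URLError, OSError, ValueError):
        return []  # server not running, wrong port, or a non-JSON reply
    return [m["id"] for m in payload.get("data", [])]
```

An empty list from `list_loaded_models()` usually means the local server has not been started or is listening on a different port.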

Ollama

| Setting | Value |
|---|---|
| Requirement | Ollama running with a vision-capable model |
| Internet | Not required |
| API Key | Not required |
| Cost | Free |

Setup: Install Ollama → Pull a vision model (e.g., llava) → Set the base URL in RenameClick (default: http://localhost:11434).
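To verify the setup outside RenameClick, one option is to ask Ollama which models have been pulled. The sketch below calls Ollama's `/api/tags` endpoint and checks for a model by name. The function names `fetch_tags` and `has_model` are hypothetical, and `llava` is used only because it is the example above.

```python
import json
import urllib.request


def has_model(tags_payload: dict, wanted: str) -> bool:
    """True if `wanted` matches a pulled model, with or without its tag.

    Ollama reports names like "llava:latest"; "llava" should match that.
    """
    names = [m.get("name", "") for m in tags_payload.get("models", [])]
    return any(n == wanted or n.split(":", 1)[0] == wanted for n in names)


def fetch_tags(base_url: str = "http://localhost:11434",
               timeout: float = 3.0) -> dict:
    """Fetch the list of locally available models from a running Ollama server."""
    url = base_url.rstrip("/") + "/api/tags"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)
```

With Ollama running, `has_model(fetch_tags(), "llava")` reports whether the vision model is available; `fetch_tags` raises an exception if the server is not reachable.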

Provider Comparison

| Provider | Privacy | Internet | Cost | Quality |
|---|---|---|---|---|
| Local | Full (offline) | No | Free | Excellent |
| OpenAI | Cloud | Yes | Paid | Excellent |
| Google Gemini | Cloud | Yes | Paid | Excellent |
| Alibaba | Cloud | Yes | Paid | Excellent |
| LM Studio | Local | No | Free | Varies by model |
| Ollama | Local | No | Free | Varies by model |

Switching Providers

1. Click the Provider selector in the workspace header.

2. Choose a provider.

3. If the provider needs configuration (API key, base URL), complete the settings dialog that appears.

The provider status indicator shows readiness (green = ready, red = not ready).

Provider Locking

Provider and model switching is locked when AI Search is running or any Auto Flow row is watching. Stop the active task first, then switch providers.
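The locking rule can be expressed as a single condition. The sketch below is illustrative only: `AppState` and `can_switch_provider` are hypothetical names and do not reflect RenameClick's internal state model.

```python
from dataclasses import dataclass, field


@dataclass
class AppState:
    """Hypothetical snapshot of the activity that affects provider locking."""
    ai_search_running: bool = False
    # One flag per Auto Flow row: True while that row is watching.
    auto_flow_watching: list[bool] = field(default_factory=list)


def can_switch_provider(state: AppState) -> bool:
    """Switching is allowed only when AI Search is idle and no row is watching."""
    return not state.ai_search_running and not any(state.auto_flow_watching)
```

A single watching Auto Flow row is enough to lock switching, even when AI Search is idle.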

Offline Mode

When using the Local provider after model download:

- No internet connection needed

- No data leaves your machine

- No API costs

- Works in airplane mode, behind firewalls, or in air-gapped environments